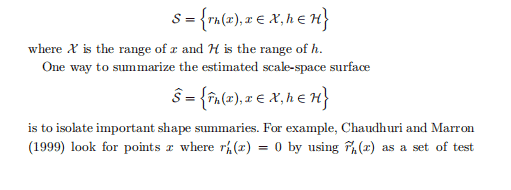

where $K$ is any one-dimensional kernel. Then there is a single bandwidth parameter $h$. At a target value $x=\left(x_{1}, \ldots, x_{d}\right)^{T}$, the local sum of squares is given by

$$

\sum_{i=1}^{n} w_{i}(x)\left(Y_{i}-a_{0}-\sum_{j=1}^{d} a_{j}\left(x_{i j}-x_{j}\right)\right)^{2}

$$

where

$$

w_{i}(x)=K\left(\left\|x_{i}-x\right\| / h\right)

$$

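The estimator is

$$

\widehat{r}_{n}(x)=\widehat{a}_{0}

$$

where $\widehat{a}=\left(\widehat{a}_{0}, \ldots, \widehat{a}_{d}\right)^{T}$ is the value of $a=\left(a_{0}, \ldots, a_{d}\right)^{T}$ that minimizes the local sum of squares above.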
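The local linear estimator above can be sketched numerically as a weighted least-squares fit. This is a minimal illustration, not the notes' own implementation: the function names `gaussian_kernel` and `local_linear_fit` are hypothetical, the Gaussian kernel is an assumed choice of $K$, and the weights use the Euclidean distance between $x_i$ and $x$.

```python
import numpy as np


def gaussian_kernel(u):
    """One-dimensional Gaussian kernel (an assumed choice of K)."""
    return np.exp(-0.5 * u**2) / np.sqrt(2.0 * np.pi)


def local_linear_fit(X, Y, x, h, kernel=gaussian_kernel):
    """Return a0_hat, the local linear estimate of r(x) at the target x.

    X : (n, d) array of covariates, Y : (n,) responses,
    x : (d,) target point, h : single bandwidth.
    """
    X = np.asarray(X, dtype=float)
    Y = np.asarray(Y, dtype=float)
    x = np.asarray(x, dtype=float)

    diffs = X - x                                   # row i is x_i - x
    w = kernel(np.linalg.norm(diffs, axis=1) / h)   # weights w_i(x)

    # Design matrix with columns (1, x_i1 - x_1, ..., x_id - x_d).
    Z = np.column_stack([np.ones(len(X)), diffs])

    # Minimizing sum_i w_i (Y_i - Z_i a)^2 is ordinary least squares
    # on the rows scaled by sqrt(w_i).
    sw = np.sqrt(w)
    coef, *_ = np.linalg.lstsq(Z * sw[:, None], Y * sw, rcond=None)
    return coef[0]                                  # the intercept a0_hat


# Usage: data that are exactly linear are reproduced by the local fit.
rng = np.random.default_rng(0)
X_demo = rng.uniform(size=(50, 2))
Y_demo = 1.0 + 2.0 * X_demo[:, 0] - 3.0 * X_demo[:, 1]
estimate = local_linear_fit(X_demo, Y_demo, x=[0.3, 0.7], h=0.5)
```

Because the demonstration responses are exactly linear in the covariates, the fitted intercept should agree with the true regression function at the target point up to floating-point error, whatever the bandwidth.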

MATH10282 COURSE NOTES:

Generally one grows a very large tree, then the tree is pruned to form a subtree by collapsing regions together. The size of the tree is a tuning parameter chosen as follows. Let $N_{m}$ denote the number of points in a rectangle $R_{m}$ of a subtree $T$ and define

$$

c_{m}=\frac{1}{N_{m}} \sum_{x_{i} \in R_{m}} Y_{i}, \quad Q_{m}(T)=\frac{1}{N_{m}} \sum_{x_{i} \in R_{m}}\left(Y_{i}-c_{m}\right)^{2}

$$

Define the complexity of $T$ by

$$

C_{\alpha}(T)=\sum_{m=1}^{|T|} N_{m} Q_{m}(T)+\alpha|T|

$$
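The complexity criterion can be evaluated directly once the responses in each rectangle are known. The sketch below is illustrative only: the function name `cost_complexity` and the representation of a subtree as a list of per-leaf response arrays are assumptions made here, not something the notes specify. In the usual cost-complexity pruning procedure, one then prefers the subtree with the smaller $C_{\alpha}(T)$ for a given $\alpha$.

```python
import numpy as np


def cost_complexity(leaves, alpha):
    """Compute C_alpha(T) = sum_m N_m * Q_m(T) + alpha * |T|.

    leaves : list of 1-D sequences, one per rectangle R_m, holding the
             responses Y_i with x_i in R_m (a hypothetical representation).
    alpha  : non-negative penalty per leaf.
    """
    total = 0.0
    for y in leaves:
        y = np.asarray(y, dtype=float)
        c_m = y.mean()                    # leaf mean c_m
        q_m = np.mean((y - c_m) ** 2)     # within-leaf variance Q_m(T)
        total += len(y) * q_m             # N_m * Q_m(T)
    return total + alpha * len(leaves)    # add the penalty alpha * |T|


# Usage: compare a two-leaf subtree with the same data collapsed to one leaf.
split = [[1, 3], [2, 2, 5]]
merged = [[1, 3, 2, 2, 5]]
c_split = cost_complexity(split, alpha=0.5)
c_merged = cost_complexity(merged, alpha=0.5)
```

With a small penalty the two-leaf subtree has the lower criterion value in this toy example; a larger $\alpha$ makes the collapsed tree preferable, which is how $\alpha$ controls the size of the selected tree.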