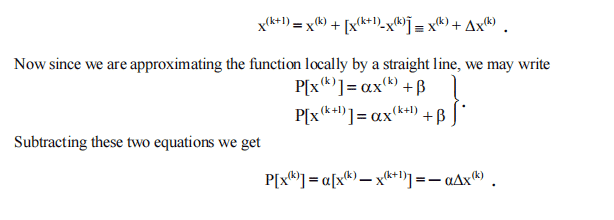

Let us begin by restricting the range to $-1 \leq x \leq 1$ and taking the simplest possible weight function, namely

$$

w(x)=1

$$

so that the equation becomes

$$

\frac{d^{2 i+1}}{d x^{2 i+1}}\left[U_{i}(x)\right]=0

$$

Since $U_{i}(x)$ is a polynomial of degree $2 i$, an obvious solution which satisfies the boundary conditions is

$$

U_{i}(x)=C_{i}\left(x^{2}-1\right)^{i} .

$$

Therefore the polynomials that satisfy the orthogonality conditions will be given by

$$

\phi_{i}(x)=C_{i} \frac{d^{i}\left(x^{2}-1\right)^{i}}{d x^{i}}

$$
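
As a quick illustration (a worked check, not part of the original derivation), the first three polynomials can be written out with the constants $C_{i}$ left undetermined:

$$

\phi_{0}(x)=C_{0}, \qquad \phi_{1}(x)=C_{1} \frac{d\left(x^{2}-1\right)}{d x}=2 C_{1} x, \qquad \phi_{2}(x)=C_{2} \frac{d^{2}\left(x^{4}-2 x^{2}+1\right)}{d x^{2}}=C_{2}\left(12 x^{2}-4\right)

$$

Orthogonality with $w(x)=1$ then follows directly for these cases:

$$

\int_{-1}^{+1} \phi_{0} \phi_{2} \, d x=C_{0} C_{2}\left[4 x^{3}-4 x\right]_{-1}^{+1}=0, \qquad \int_{-1}^{+1} \phi_{0} \phi_{1} \, d x=\int_{-1}^{+1} \phi_{1} \phi_{2} \, d x=0

$$

where the last two integrals vanish because their integrands are odd functions of $x$.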

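If we apply the normalization criterion we get

$$

\int_{-1}^{+1} \phi_{i}^{2}(x) d x=1=C_{i}^{2} \int_{-1}^{+1}\left[\frac{d^{i}\left(x^{2}-1\right)^{i}}{d x^{i}}\right]^{2} d x

$$

Since the integral on the right equals $2\left(2^{i} i !\right)^{2} /(2 i+1)$, strict unit normalization would require $C_{i}=\sqrt{(2 i+1) / 2}\left[2^{i} i !\right]^{-1}$. The conventional choice, which yields the Legendre polynomials with $\phi_{i}(1)=1$ and $\int_{-1}^{+1} \phi_{i}^{2}(x) d x=2 /(2 i+1)$, is

$$

C_{i}=\left[2^{i} i !\right]^{-1}

$$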
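
The construction above can be checked numerically. The following is a minimal sketch, assuming polynomials are stored as coefficient lists (lowest degree first) and using exact rational arithmetic. The helper names `poly_mul`, `poly_diff`, `poly_integrate`, `phi`, and `inner` are illustrative choices, not anything defined in the notes. It builds $\phi_{i}$ from the derivative formula with $C_{i}=\left[2^{i} i !\right]^{-1}$ and evaluates the integrals $\int_{-1}^{+1} \phi_{i} \phi_{j} \, d x$.

```python
from fractions import Fraction
from math import factorial


def poly_mul(p, q):
    """Multiply two polynomials given as coefficient lists (lowest degree first)."""
    out = [Fraction(0)] * (len(p) + len(q) - 1)
    for a, pa in enumerate(p):
        for b, qb in enumerate(q):
            out[a + b] += pa * qb
    return out


def poly_diff(p):
    """Differentiate a polynomial once; a constant differentiates to [0]."""
    return [k * c for k, c in enumerate(p)][1:] or [Fraction(0)]


def poly_integrate(p, lo=-1, hi=1):
    """Integrate a polynomial exactly over [lo, hi]."""
    return sum(
        c * (Fraction(hi) ** (k + 1) - Fraction(lo) ** (k + 1)) / (k + 1)
        for k, c in enumerate(p)
    )


def phi(i):
    """phi_i(x) = C_i * d^i/dx^i (x^2 - 1)^i, with C_i = 1 / (2^i * i!)."""
    u = [Fraction(1)]
    for _ in range(i):
        u = poly_mul(u, [Fraction(-1), Fraction(0), Fraction(1)])  # times (x^2 - 1)
    for _ in range(i):
        u = poly_diff(u)
    c_i = Fraction(1, 2 ** i * factorial(i))
    return [c_i * c for c in u]


def inner(i, j):
    """Integral of phi_i * phi_j over [-1, 1] with weight w(x) = 1."""
    return poly_integrate(poly_mul(phi(i), phi(j)))


# Squared norms for i = 0..3 and one off-diagonal integral.
norms = [inner(i, i) for i in range(4)]
cross = inner(1, 3)
```

With this choice of $C_{i}$, the off-diagonal integrals vanish and the squared norms come out as $2 /(2 i+1)$, so dividing $\phi_{i}$ by the square root of that value would give polynomials of unit norm.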

MATH 651 COURSE NOTES:

Let us begin by considering a collection of $N$ data points $\left(x_{i}, Y_{i}\right)$ which are to be represented by an approximating function $f\left(a_{j}, x\right)$ so that

$$

f\left(a_{j}, x_{i}\right)=Y_{i}

$$

Here the $(n+1)$ parameters $a_{j}$ are to be determined so that the sum of the squared deviations from $Y_{i}$ is a minimum. We can write the deviation as

$$

\varepsilon_{i}=Y_{i}-f\left(a_{j}, x_{i}\right)

$$

The conditions that the sum-square error be a minimum are just

$$

\frac{\partial \sum_{i=1}^{N} \varepsilon_{i}^{2}}{\partial a_{j}}=-2 \sum_{i=1}^{N}\left[Y_{i}-f\left(a_{j}, x_{i}\right)\right] \frac{\partial f\left(a_{j}, x_{i}\right)}{\partial a_{j}}=0, \quad j=0,1,2, \cdots, n

$$
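
To make these conditions concrete, here is a minimal sketch under one stated assumption: the approximating function is a polynomial, $f\left(a_{j}, x\right)=\sum_{j=0}^{n} a_{j} x^{j}$, so that $\partial f / \partial a_{j}=x^{j}$ and the minimum conditions reduce to a linear system in the $a_{j}$. The function name `fit_polynomial` and the sample data are hypothetical and chosen only for illustration.

```python
def fit_polynomial(xs, ys, n):
    """Least-squares coefficients a_0..a_n of f(a, x) = sum_j a_j * x**j.

    Assumes f is linear in its parameters, so the conditions
    sum_i [Y_i - f(a, x_i)] * x_i**j = 0 become the linear system
    sum_k (sum_i x_i**(j + k)) * a_k = sum_i Y_i * x_i**j.
    Requires at least n + 1 distinct x values, otherwise the system is singular.
    """
    m = n + 1
    # Augmented matrix [A | b] of the linear system above.
    aug = [
        [sum(x ** (j + k) for x in xs) for k in range(m)]
        + [sum(y * x ** j for x, y in zip(xs, ys))]
        for j in range(m)
    ]
    # Gaussian elimination with partial pivoting.
    for col in range(m):
        pivot = max(range(col, m), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, m):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, m + 1):
                aug[r][c] -= factor * aug[col][c]
    # Back substitution.
    a = [0.0] * m
    for r in reversed(range(m)):
        s = sum(aug[r][c] * a[c] for c in range(r + 1, m))
        a[r] = (aug[r][m] - s) / aug[r][r]
    return a


# Hypothetical data lying close to a straight line.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.1, 2.9, 5.2, 6.8]
a = fit_polynomial(xs, ys, 1)

# Deviations eps_i = Y_i - f(a, x_i) and the sums that should vanish at the minimum.
eps = [y - sum(aj * x ** j for j, aj in enumerate(a)) for x, y in zip(xs, ys)]
conditions = [sum(e * x ** j for e, x in zip(eps, xs)) for j in range(len(a))]
```

At the fitted parameters, each entry of `conditions` should be zero to within rounding error, which is the minimum condition for $j=0,1, \cdots, n$. Solving the system by direct elimination is a choice made here for self-containment; for high polynomial degree this system becomes ill-conditioned and other approaches may be preferable.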