This is a successful case study for MATH1005/MATH1905 at the University of Sydney.

$$

P\left(\beta_{j} \mid \delta_{j}\right)=\delta_{j} P\left(\beta_{j} \mid \delta_{j}=1\right)+\left(1-\delta_{j}\right) P\left(\beta_{j} \mid \delta_{j}=0\right),

$$

whereby $\beta_{j}$ has a relatively diffuse prior when $\delta_{j}=1$ and $X_{j}$ is included in the usual way, but for $\delta_{j}=0$ the prior is centred at zero with high precision, so that while $X_{j}$ is still in the regression, it is essentially irrelevant to that regression. For instance, if

$$

\left(\beta_{j} \mid \delta_{j}=1\right) \sim N\left(0, V_{j}\right),

$$

one might assume $V_{j}$ large, leading to a prior that allows a search among values that reflect the predictor’s possible effect, whereas

$$

\left(\beta_{j} \mid \delta_{j}=0\right) \sim N\left(0, c_{j} V_{j}\right),

$$

where $c_{j}$ is small and chosen so that the range of $\beta_{j}$ under $P\left(\beta_{j} \mid \delta_{j}=0\right)$ is confined to substantively insignificant values. So the above prior becomes

$$

P\left(\beta_{j} \mid \delta_{j}\right)=\delta_{j} N\left(0, V_{j}\right)+\left(1-\delta_{j}\right) N\left(0, c_{j} V_{j}\right) .

$$
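To make the two-component prior concrete, the following is a minimal sketch that draws $\beta_{j}$ from the mixture above for a given indicator $\delta_{j}$. The function name `sample_mixture_prior` and the values $V_{j}=100$, $c_{j}=10^{-4}$ are illustrative assumptions, not values taken from the text.

```python
import numpy as np

def sample_mixture_prior(delta, V, c, rng):
    """Draw beta_j from delta_j * N(0, V_j) + (1 - delta_j) * N(0, c_j * V_j)."""
    delta = np.asarray(delta)
    V = np.asarray(V, dtype=float)
    c = np.asarray(c, dtype=float)
    # Variance is V_j when the predictor is included (delta_j = 1),
    # and the much smaller c_j * V_j when it is effectively excluded.
    var = np.where(delta == 1, V, c * V)
    return rng.normal(loc=0.0, scale=np.sqrt(var))

rng = np.random.default_rng(0)
V, c = 100.0, 1e-4   # assumed values, chosen only for illustration
n = 20_000

slab = sample_mixture_prior(np.ones(n, dtype=int), V, c, rng)    # delta_j = 1
spike = sample_mixture_prior(np.zeros(n, dtype=int), V, c, rng)  # delta_j = 0

slab_sd = slab.std()
spike_sd = spike.std()
print(f"sd with delta=1: {slab_sd:.3f}, sd with delta=0: {spike_sd:.4f}")
```

With these assumed values the prior standard deviations are $\sqrt{V_{j}}=10$ and $\sqrt{c_{j} V_{j}}=0.1$, so draws under $\delta_{j}=0$ stay close to zero while draws under $\delta_{j}=1$ range widely.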

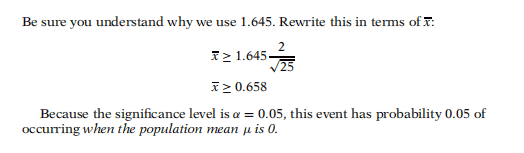

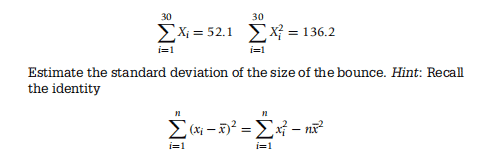

MATH1005/MATH1905 COURSE NOTES:

The ridge regression approach is closely related to a version of the standard posterior Bayes regression estimate, but with an exchangeable prior distribution on the elements of the regression vector. Thus in $y=X \beta+\varepsilon$, with $\varepsilon \sim N\left(0, \sigma^{2}\right)$, assume that the elements of $\beta$ are drawn from a common normal density

$$

\beta_{j} \sim N\left(0, \sigma^{2} / k\right) \quad j=2, \ldots, p,

$$

where a preliminary standardisation of the variables $x_{2}, \ldots, x_{p}$ may be needed to make this prior assumption more plausible. The mean of the posterior distribution of $\beta$ given $y$ is then

$$

E(\beta \mid y)=\left(X^{\prime} X+k I\right)^{-1} X^{\prime} y .

$$
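The posterior mean above can be computed directly. The sketch below is one possible implementation under simplifying assumptions: the data are simulated, the function name `ridge_posterior_mean` is hypothetical, and the design matrix has no intercept column, so every coefficient is shrunk (in the text the prior covers only $j=2, \ldots, p$).

```python
import numpy as np

def ridge_posterior_mean(X, y, k):
    """Posterior mean (X'X + kI)^{-1} X'y under beta_j ~ N(0, sigma^2 / k)."""
    p = X.shape[1]
    # Solve the linear system instead of forming the inverse explicitly.
    return np.linalg.solve(X.T @ X + k * np.eye(p), X.T @ y)

# Simulated data, used only for illustration.
rng = np.random.default_rng(1)
n, p = 50, 3
X = rng.normal(size=(n, p))
X = (X - X.mean(axis=0)) / X.std(axis=0)   # preliminary standardisation
beta_true = np.array([1.5, -2.0, 0.5])
y = X @ beta_true + rng.normal(scale=1.0, size=n)

b_ols = ridge_posterior_mean(X, y, k=0.0)     # k = 0 recovers least squares
b_ridge = ridge_posterior_mean(X, y, k=10.0)  # k > 0 shrinks towards zero

print("k = 0: ", b_ols)
print("k = 10:", b_ridge)
```

Setting $k=0$ corresponds to an infinitely diffuse prior and returns the least squares estimate, while larger $k$ (a tighter prior around zero) pulls the coefficient vector towards the origin.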

If the prior on $\beta$ specifies a location, as in

$$

\beta \sim N\left(\gamma,\left(\sigma^{2} / k\right) I\right)

$$

then the posterior mean of $\beta$ becomes

$$

E(\beta \mid y)=\left(k I / \sigma^{2}+X^{\prime} X / \sigma^{2}\right)^{-1}\left(k \gamma / \sigma^{2}+X^{\prime} y / \sigma^{2}\right) .

$$
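As a rough numerical check of this expression, the sketch below evaluates it with the prior precision read as $k I / \sigma^{2}$ and $\gamma$ treated as a vector. The function name `posterior_mean_with_location`, the simulated data and the chosen values of $k$ and $\gamma$ are assumptions made for illustration. Because $\sigma^{2}$ appears in every term, it cancels, leaving $\left(X^{\prime} X+k I\right)^{-1}\left(k \gamma+X^{\prime} y\right)$.

```python
import numpy as np

def posterior_mean_with_location(X, y, k, gamma, sigma2=1.0):
    """Posterior mean of beta under the prior beta ~ N(gamma, (sigma^2 / k) I)."""
    p = X.shape[1]
    prior_precision = k * np.eye(p) / sigma2
    data_precision = X.T @ X / sigma2
    # Precision-weighted combination of the prior location and the data.
    rhs = k * gamma / sigma2 + X.T @ y / sigma2
    return np.linalg.solve(prior_precision + data_precision, rhs)

# Simulated data, used only for illustration.
rng = np.random.default_rng(2)
X = rng.normal(size=(40, 2))
y = X @ np.array([1.0, -1.0]) + rng.normal(size=40)
gamma = np.array([2.0, 2.0])   # assumed prior location

m_zero = posterior_mean_with_location(X, y, k=5.0, gamma=np.zeros(2))
m_loc = posterior_mean_with_location(X, y, k=5.0, gamma=gamma)
m_loc_s4 = posterior_mean_with_location(X, y, k=5.0, gamma=gamma, sigma2=4.0)
m_big_k = posterior_mean_with_location(X, y, k=1e8, gamma=gamma)

print("gamma = 0:     ", m_zero)
print("gamma = (2, 2):", m_loc)
print("very large k:  ", m_big_k)
```

With $\gamma=0$ the result coincides with the ridge form $\left(X^{\prime} X+k I\right)^{-1} X^{\prime} y$, changing `sigma2` leaves the answer unchanged, and a very large $k$ pulls the posterior mean onto the prior location $\gamma$.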