We say that a trading strategy $\phi=\left(\phi^{1}, \phi^{2}\right)$ over the time interval $[0, T]$ is self-financing if its wealth process $V(\phi)$, defined by

$$

V_{t}(\phi)=\phi_{t}^{1} S_{t}+\phi_{t}^{2} B_{t}, \quad \forall t \in[0, T],

$$

satisfies the following condition

$$

V_{t}(\phi)=V_{0}(\phi)+\int_{0}^{t} \phi_{u}^{1} d S_{u}+\int_{0}^{t} \phi_{u}^{2} d B_{u}, \quad \forall t \in[0, T],

$$

where the first integral is understood in the Itô sense and the second is the pathwise Riemann (or Lebesgue) integral.
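The self-financing condition has a simple discrete-time analogue that can be checked numerically. The sketch below is an illustration under stated assumptions, not part of the notes: holdings are kept fixed over each step of a time grid, the stock path is a simulated geometric Brownian motion, and the function names (`self_financing_wealth`, `cumulative_gains`), the parameter values, and the rule used for $\phi^{1}$ are all hypothetical. It chooses the bond holding so that no money is added or withdrawn, and then confirms that terminal wealth equals initial wealth plus the accumulated gains from the stock and the bond.

```python
import math
import random


def self_financing_wealth(S, B, phi1, v0):
    """Discrete-time analogue: hold phi1[k] shares over [t_k, t_{k+1}]
    and keep the rest of the current wealth in the bond."""
    V = [v0]
    phi2 = []
    for k in range(len(S) - 1):
        # Bond holding chosen so that the portfolio at t_k is worth exactly V[k].
        phi2_k = (V[k] - phi1[k] * S[k]) / B[k]
        phi2.append(phi2_k)
        # Holdings are frozen over the step; only prices move.
        V.append(phi1[k] * S[k + 1] + phi2_k * B[k + 1])
    return V, phi2


def cumulative_gains(S, B, phi1, phi2):
    """Riemann-type sums standing in for the two integrals."""
    return sum(
        phi1[k] * (S[k + 1] - S[k]) + phi2[k] * (B[k + 1] - B[k])
        for k in range(len(phi2))
    )


# Illustrative parameters (assumptions, not taken from the notes).
random.seed(0)
n, T, r, sigma, s0, v0 = 250, 1.0, 0.05, 0.2, 100.0, 100.0
dt = T / n
B = [math.exp(r * k * dt) for k in range(n + 1)]
S = [s0]
for _ in range(n):
    z = random.gauss(0.0, 1.0)
    S.append(S[-1] * math.exp((r - 0.5 * sigma**2) * dt + sigma * math.sqrt(dt) * z))

# An arbitrary rule for the stock holding that uses only the current price.
phi1 = [1.0 if S[k] < s0 else 0.5 for k in range(n)]

V, phi2 = self_financing_wealth(S, B, phi1, v0)
gap = V[-1] - (v0 + cumulative_gains(S, B, phi1, phi2))
print(abs(gap) < 1e-8)
```

In this discrete setting the identity holds exactly, up to floating-point error, because each one-step wealth change telescopes. The continuous-time condition replaces the sums with the Itô and pathwise integrals.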

It is, of course, implicitly assumed that both integrals on the right-hand side are well-defined. A well-known sufficient condition for this is that

$$

\mathbb{P}\left\{\int_{0}^{T}\left(\phi_{u}^{1}\right)^{2} d u<\infty\right\}=1 \quad \text { and } \quad \mathbb{P}\left\{\int_{0}^{T}\left|\phi_{u}^{2}\right| d u<\infty\right\}=1 .

$$

We denote by $\Phi$ the class of all self-financing trading strategies. It follows from the example below that arbitrage opportunities are not excluded a priori from the class of self-financing trading strategies.

STAT4528 COURSE NOTES :

$$

c(s, t)=s N\left(d_{1}(s, t)\right)-K e^{-r t} N\left(d_{2}(s, t)\right)

$$

where

$$

d_{1}(s, t)=\frac{\ln (s / K)+\left(r+\frac{1}{2} \sigma^{2}\right) t}{\sigma \sqrt{t}}

$$

and $d_{2}(s, t)=d_{1}(s, t)-\sigma \sqrt{t}$, or explicitly

$$

d_{2}(s, t)=\frac{\ln (s / K)+\left(r-\frac{1}{2} \sigma^{2}\right) t}{\sigma \sqrt{t}} .

$$
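The formulas for $c$, $d_{1}$ and $d_{2}$ translate directly into code. The following is a minimal sketch rather than a reference implementation: the function names and the sample parameter values are assumptions, $N$ is taken to be the standard normal cumulative distribution function (computed here with `statistics.NormalDist` from the Python standard library), and $t$ is read as the time remaining to maturity, which is how the discount factor $e^{-r t}$ suggests it should be interpreted.

```python
import math
from statistics import NormalDist

# Standard normal CDF, playing the role of N in the formulas.
_N = NormalDist().cdf


def d1(s, t, K, r, sigma):
    """d_1(s, t) for stock price s and time to maturity t > 0."""
    return (math.log(s / K) + (r + 0.5 * sigma**2) * t) / (sigma * math.sqrt(t))


def d2(s, t, K, r, sigma):
    """d_2(s, t) = d_1(s, t) - sigma * sqrt(t)."""
    return d1(s, t, K, r, sigma) - sigma * math.sqrt(t)


def call_price(s, t, K, r, sigma):
    """c(s, t) = s N(d_1) - K exp(-r t) N(d_2)."""
    if s <= 0 or K <= 0 or t <= 0 or sigma <= 0:
        raise ValueError("s, K, t and sigma must be strictly positive")
    return s * _N(d1(s, t, K, r, sigma)) - K * math.exp(-r * t) * _N(d2(s, t, K, r, sigma))


# Illustrative inputs (assumptions): at-the-money call, one year to maturity.
price = call_price(s=100.0, t=1.0, K=100.0, r=0.05, sigma=0.2)
print(round(price, 4))
```

With these sample inputs $d_{1}=0.35$ and $d_{2}=0.15$, and the price comes out at roughly 10.45. The guard on the inputs is there because the formula divides by $\sigma \sqrt{t}$ and takes $\ln (s / K)$.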

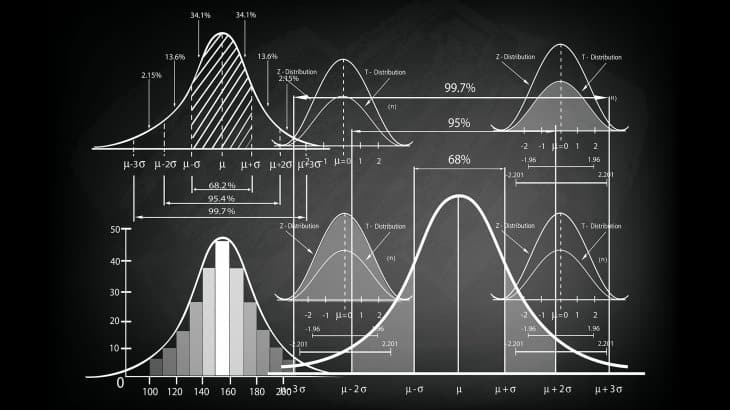

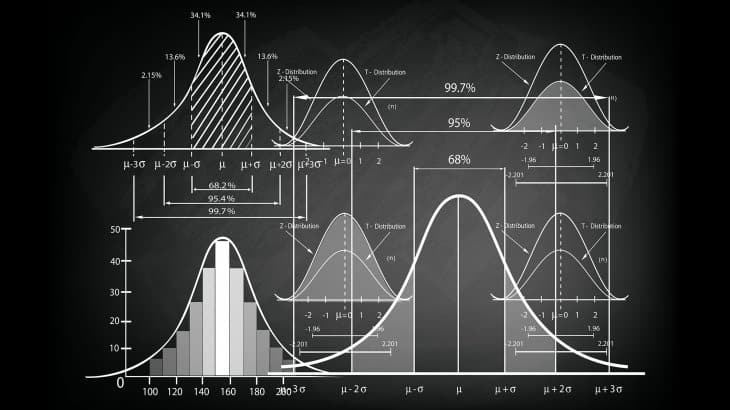

Furthermore, let $N$ stand for the standard Gaussian cumulative distribution function

$$

N(x)=\frac{1}{\sqrt{2 \pi}} \int_{-\infty}^{x} e^{-z^{2} / 2} d z, \quad \forall x \in \mathbb{R} .

$$
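In practice $N$ is rarely evaluated from the integral itself. A standard identity expresses it through the error function, $N(x)=\frac{1}{2}\left(1+\operatorname{erf}(x / \sqrt{2})\right)$. The sketch below compares that identity with a direct trapezoidal approximation of the defining integral; the function names, the truncation of the lower limit at $-10$, and the number of grid points are illustrative assumptions.

```python
import math


def N_erf(x):
    """Standard normal CDF via the error function identity."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def N_quadrature(x, lower=-10.0, steps=20000):
    """Trapezoidal approximation of the defining integral.

    The lower limit -infinity is truncated at `lower` (an assumption);
    the mass below -10 is negligible at double precision.
    """
    if x <= lower:
        return 0.0
    h = (x - lower) / steps

    def density_kernel(z):
        return math.exp(-0.5 * z * z)

    total = 0.5 * (density_kernel(lower) + density_kernel(x))
    total += sum(density_kernel(lower + i * h) for i in range(1, steps))
    return total * h / math.sqrt(2.0 * math.pi)


for x in (-1.5, 0.0, 1.96):
    print(x, N_erf(x), N_quadrature(x))
```

The two columns should agree to several decimal places. The closed-form version is the one to use in pricing code, since it is both faster and more accurate.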

We adopt the following notational convention:

$$

d_{1,2}(s, t)=\frac{\ln (s / K)+\left(r \pm \frac{1}{2} \sigma^{2}\right) t}{\sigma \sqrt{t}} .

$$

Let us denote by $C_{t}$ the arbitrage price at time $t$ of a European call option in the Black-Scholes model. We are in a position to state the main result.