This is a successful case study of ghostwritten homework for MATH 7371 at Northeastern University (USA).

$$
U_{1}=\partial_{1} W \cap U=\partial_{1} W \setminus D(-v)
$$

will be denoted by $(-v)^{\rightsquigarrow}$ and called the transport map associated to $(-v)$, so that

$$
(-v)^{\rightsquigarrow}(x)=E(x,-v)=\gamma(x, \tau(x,-v) ;-v) .
$$
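As a hypothetical illustration (not from the notes), take the cylinder $W=S^1\times[0,1]$ with $f(\theta,s)=s$, so that $\partial_0 W=S^1\times\{0\}$ and $\partial_1 W=S^1\times\{1\}$, and the constant field $v=\alpha\,\partial_\theta+\partial_s$ for some fixed $\alpha\in\mathbb{R}$. Here $df(v)=1>0$, so $v$ has no zeros and $U_1$ is all of $\partial_1 W$. The $-v$-trajectories are straight lines in the coordinates $(\theta,s)$, so $\gamma$, $\tau$ and $E$ can be computed by hand ($\theta$ is read modulo $2\pi$):

$$
\begin{aligned}
\gamma\big((\theta,s),t;-v\big) &= (\theta-\alpha t,\ s-t), \qquad 0\le t\le s,\\
\tau\big((\theta,1),-v\big) &= 1,\\
E\big((\theta,1),-v\big) &= \gamma\big((\theta,1),1;-v\big) = (\theta-\alpha,\ 0).
\end{aligned}
$$

Thus in this example the transport map along $-v$ is the rotation $(\theta,1)\mapsto(\theta-\alpha,0)$, evidently a diffeomorphism $\partial_1 W\to\partial_0 W$.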

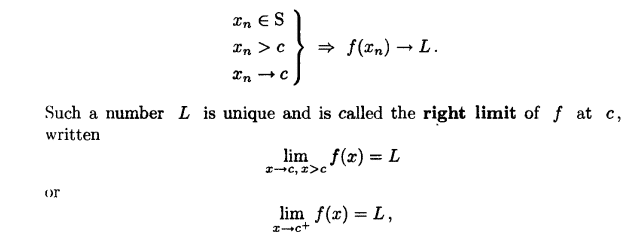

The transport map is a diffeomorphism

$$
(-v)^{\rightsquigarrow}: U_{1} \stackrel{\approx}{\longrightarrow} U_{0}
$$

of $U_{1}$ onto the open subset $U_{0}$ of $\partial_{0} W$:

$$
U_{0}=\partial_{0} W \cap U=\partial_{0} W \setminus D(v) .
$$

The inverse diffeomorphism is the transport map corresponding to $v$:

$$
v^{\rightsquigarrow}: U_{0} \stackrel{\approx}{\longrightarrow} U_{1} .
$$
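The inverse relation can also be checked numerically when the flow has no closed form. The sketch below is a hypothetical illustration, not part of the notes: it takes $W=\mathbb{R}\times[0,1]$ with $f(x,y)=y$ and a made-up field $v(x,y)=(0.5\sin(x+y),\,1+0.25\cos x)$, which satisfies $df(v)\ge 0.75>0$ and so has no zeros. It approximates $\tau$ and $E$ by integrating the flow with classical fourth-order Runge-Kutta steps and bisecting the last step so the trajectory lands on the target level. The helpers `f`, `v`, `minus_v`, `rk4_step` and `transport` are illustrative names introduced here.

```python
import math

# Hypothetical example (not from the notes): W = R x [0, 1], f(x, y) = y,
# so d_0 W = {y = 0} and d_1 W = {y = 1}. The made-up field v satisfies
# df(v) = 1 + 0.25*cos(x) >= 0.75 > 0, so it has no zeros and every
# trajectory crosses W from one boundary component to the other.

def f(p):
    return p[1]

def v(p):
    x, y = p
    return (0.5 * math.sin(x + y), 1.0 + 0.25 * math.cos(x))

def minus_v(p):
    vx, vy = v(p)
    return (-vx, -vy)

def rk4_step(field, p, h):
    """One classical Runge-Kutta (RK4) step of size h for p' = field(p)."""
    def shift(q, k, s):
        return tuple(qi + s * ki for qi, ki in zip(q, k))
    k1 = field(p)
    k2 = field(shift(p, k1, h / 2))
    k3 = field(shift(p, k2, h / 2))
    k4 = field(shift(p, k3, h))
    return tuple(pi + h / 6 * (a + 2 * b + 2 * c + d)
                 for pi, a, b, c, d in zip(p, k1, k2, k3, k4))

def transport(field, p, level, h=1e-2, tol=1e-13, max_steps=10**6):
    """Follow p' = field(p) from p until f reaches `level`.

    Returns (tau, E): numerical stand-ins for the hitting time tau(p, field)
    and the hitting point E(p, field) = gamma(p, tau; field).
    """
    s0 = f(p) - level
    if s0 == 0:
        return 0.0, p
    t = 0.0
    for _ in range(max_steps):
        q = rk4_step(field, p, h)
        if (f(q) - level) * s0 <= 0:      # the level is crossed in this step
            lo, hi = 0.0, h               # bisect on the size of the last step
            while hi - lo > tol:
                mid = (lo + hi) / 2
                if (f(rk4_step(field, p, mid)) - level) * s0 > 0:
                    lo = mid
                else:
                    hi = mid
            return t + hi, rk4_step(field, p, hi)
        p, t = q, t + h
    raise RuntimeError("trajectory did not reach the level set")

# Transport (0.3, 1) in d_1 W down along -v, then back up along v.
tau1, x0 = transport(minus_v, (0.3, 1.0), level=0.0)
tau0, back = transport(v, x0, level=1.0)
print(x0, back, tau1, tau0)
```

Transporting a point of $\partial_1 W$ down along $-v$ and then back up along $v$ should return the starting point up to integration error, and the two hitting times should agree, because the $v$-trajectory is the $-v$-trajectory traversed backwards.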

MATH 7371 COURSE NOTES:

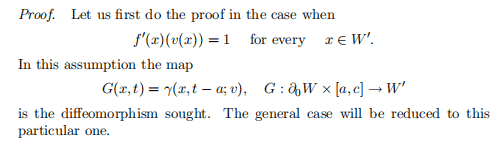

Proof. Let $W^{\prime}=f^{-1}([c, d])$. The Morse function

$$
f \mid W^{\prime}: W^{\prime} \rightarrow [c, d]
$$

has no critical points, hence the domain of definition of the functions $\tau\left(\cdot,-v \mid W^{\prime}\right)$ and $E\left(\cdot,-v \mid W^{\prime}\right)$ is the whole of $W^{\prime}$. The deformation retraction

$$
H: W_{1} \times[0,1] \rightarrow W_{1}
$$

is defined as follows:

$$
\begin{array}{ll}
H(x, t)=\gamma\left(x, t \cdot \tau\left(x,-v \mid W^{\prime}\right) ;-v \mid W^{\prime}\right) & \text { for } \quad x \in W^{\prime}, \\
H(x, t)=x & \text { for } \quad x \in W_{0} .
\end{array}
$$
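A short check of why this formula works, under assumptions that are standard in this setting but not restated in the excerpt: $v$ is an $f$-gradient-like vector field (so $df(v)>0$ away from critical points), $W'$ is compact and a $-v$-trajectory can leave $W'$ only through $f^{-1}(c)$, and $W_1=W_0\cup W'$ with $W_0\cap W'=f^{-1}(c)$ (the precise definitions of $W_0$ and $W_1$ are in the statement, which is not reproduced here). Write $\tau(x)=\tau(x,-v\mid W')$, $y_t=\gamma(x,t;-v\mid W')$ and $\varepsilon=\min_{W'}df(v)>0$.

$$
\begin{aligned}
&\frac{d}{dt} f(y_t) = -\,df_{y_t}\big(v(y_t)\big) \le -\varepsilon
\;\Longrightarrow\; \tau(x) \le \frac{f(x)-c}{\varepsilon} \le \frac{d-c}{\varepsilon} \quad (x\in W'),\\
&x\in W_0\cap W'=f^{-1}(c) \;\Longrightarrow\; \tau(x)=0 \;\Longrightarrow\; \gamma\big(x,\,t\cdot 0;\,-v\mid W'\big)=x \quad \text{(the two cases agree)},\\
&x\in W' \;\Longrightarrow\; H(x,0)=x,\qquad H(x,1)=E(x,-v\mid W')\in f^{-1}(c)\subset W_0,\\
&x\in W_0 \;\Longrightarrow\; H(x,t)=x \quad \text{for all } t\in[0,1].
\end{aligned}
$$

The first line is why $\tau(\cdot,-v\mid W')$ and $E(\cdot,-v\mid W')$ are defined on all of $W'$; the remaining lines show that $H$ is well defined, starts at the identity, ends in $W_0$, and fixes $W_0$ pointwise. Continuity of $H$ then follows from continuity of $\tau$ and of the flow, by the pasting lemma applied to the closed sets $W_0$ and $W'$.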

The same formula defines a deformation retraction of $U$ onto $W_{0}$.
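The two-case formula for $H$ can also be evaluated numerically. The following is a hypothetical sketch, not from the notes: it takes $f(x,y)=y$ on $\mathbb{R}\times[0,1]$ with $c=0.5$, so that $W'=f^{-1}([0.5,1])$, assumes $W_0=f^{-1}([0,0.5])$, and uses a made-up gradient-like field $v(x,y)=(0.5\sin(x+y),\,1+0.25\cos x)$. The helpers `gamma`, `tau` and `H` are illustrative names; `tau` locates the hitting time of $f=c$ by Runge-Kutta integration plus bisection on the last step, and `gamma` integrates the $-v$ flow for a prescribed time.

```python
import math

# Hypothetical setup (not from the notes): f(x, y) = y on R x [0, 1], c = 0.5,
# so W' = f^{-1}([0.5, 1]); we assume W_0 = f^{-1}([0, 0.5]).
C = 0.5

def f(p):
    return p[1]

def minus_v(p):
    """-v for the made-up field v(x, y) = (0.5 sin(x + y), 1 + 0.25 cos x)."""
    x, y = p
    return (-0.5 * math.sin(x + y), -(1.0 + 0.25 * math.cos(x)))

def rk4_step(field, p, h):
    """One classical Runge-Kutta (RK4) step of size h for p' = field(p)."""
    def shift(q, k, s):
        return tuple(qi + s * ki for qi, ki in zip(q, k))
    k1 = field(p)
    k2 = field(shift(p, k1, h / 2))
    k3 = field(shift(p, k2, h / 2))
    k4 = field(shift(p, k3, h))
    return tuple(pi + h / 6 * (a + 2 * b + 2 * c + d)
                 for pi, a, b, c, d in zip(p, k1, k2, k3, k4))

def gamma(p, T, n=1000):
    """Approximate gamma(p, T; -v) with n RK4 steps of size T / n."""
    h = T / n
    for _ in range(n):
        p = rk4_step(minus_v, p, h)
    return p

def tau(p, h=1e-2, tol=1e-13, max_steps=10**6):
    """Numerical tau(p, -v | W'): time for the -v trajectory from p to reach f = C."""
    t = 0.0
    for _ in range(max_steps):
        if f(p) <= C:                 # already on (or below) the level f = C
            return t
        q = rk4_step(minus_v, p, h)
        if f(q) <= C:                 # the level is crossed during this step
            lo, hi = 0.0, h           # bisect on the size of the last step
            while hi - lo > tol:
                mid = (lo + hi) / 2
                if f(rk4_step(minus_v, p, mid)) > C:
                    lo = mid
                else:
                    hi = mid
            return t + hi
        p, t = q, t + h
    raise RuntimeError("trajectory did not reach f = C")

def H(p, t):
    """Two-case formula: identity on W_0, slide down along -v on W'."""
    if f(p) <= C:                     # p in W_0: stay put
        return p
    return gamma(p, t * tau(p))       # p in W': flow for time t * tau(p)

p = (0.3, 0.9)
print([round(f(H(p, t)), 6) for t in (0.0, 0.5, 1.0)])
```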